Hindsight is 20/20: Examining the Unintended Consequences of Containers

by Lindsay Rowntree on 20th Jun 2017 in News

The proliferation of header bidding sparked a need among publishers to adopt new technology to ease the technical load of managing multiple header bidding partners, or risk losing control of their revenue optimisation. At least, writes Jason Fairchild, CRO and co-founder, OpenX, this is what most publishers were led to believe as they began integrating one variety of container or another to manage their programmatic revenue. Given the problems containers promise to solve, this evolution seems logical on the surface.

But the speed at which this technology has evolved – over 10 companies are offering at least three variations of containers without standardised guidelines – and the frenzied industry hype around header bidding have masked serious unintended consequences.

Publishers considering a container, or ones who have just jumped into the fray, need to understand these consequences and also how to retain the incremental value captured with header bidding in the first place:

Diminished control for publishers

Paradoxically, while the fundamental promise of containers is to provide better control over header bidding, containers actually limit transparency and control in very important ways.

As an example, to optimise header bidding for publishers, ad tech companies employ highly specialised yield teams that work to improve every aspect of header bidding performance. These teams work with publishers to optimise several highly nuanced and technical aspects of the implementation to squeeze out every penny of incremental yield. In some cases, publishers have improved header bidding yield by up to 50% upon incorporating these techniques.

Yield optimisation requires the technology partner to work directly with the publisher, including within the publisher's ad server, to tweak settings and improve results. Unfortunately for publishers, most yield teams are blind to what’s going on once their technology is put into a container. This is definitely bad for publishers and their goal of maximising yield. Containers present a technical barrier that effectively obfuscates the entire tech stack and precludes header bidding tech partners from undertaking vital yield optimisation techniques that could deliver incremental revenue.

It’s almost impossible to tune and optimise the highly sensitive programmatic technology stack from within a container. It’s like trying to tune a grand piano without accessing the strings. It can’t be done.

Lack of control (again)

Let’s address the notion of 'technology-as-a-service' in the container space. The appeal of outsourcing programmatic technology to a third party is understandable – it’s complex and tech-resource heavy. But the reality is that there are no truly neutral third parties; and this conflict will ultimately impact a publisher’s header bidding revenue.

To illustrate this point, ask the obvious question: how many neutral third-party container companies would assign a yield team to optimise another exchange’s revenue from within their container? The answer is zero. Why? Like Google controlling the entire tech stack, it comes down to a simple conflict of interest – if a competing exchange or demand source performs well, it’s bad for the container company’s revenue.

Further, proprietary container providers often have full control over implementation of third-party demand into their containers, and it’s not uncommon to see delays in integrating new demand partners. Claims of technical backlog are the norm, but make no mistake, dragging their feet to implement competing demand partners directly benefits their exchange via reduced competition (at the expense of publisher yield).

No matter how you look at it, current container companies are conflicted. Whether through lack of transparency, bias towards their own demand, or 'delays' integrating new partners into the container, these conflicts of interest, coupled with the absence of control for publishers, are just bad for publishers. With such limited control (or none at all) of their programmatic revenue, which often accounts for 50% or more of their overall revenue, publishers shouldn’t outsource their core business to conflicted parties. Period.

Inconsistent technology and performance

Containers today don’t operate in standard ways. They don’t make calls based on well-established OpenRTB protocols. There are no technology standards for containers, and all containers rely on implementations that are different for each publisher and demand partner. All of this creates non-standard results.

For publishers, whose only view of the landscape is from the point of view of their own tech stack, it may not be immediately obvious that the container impacts the performance of demand partners, and ultimately their revenue potential. An exchange’s performance can vary dramatically depending on how it is integrated with a publisher, whether that is directly on their page, or from inside a container. These performance discrepancies are a significant issue.

Publishers should test header bidding partners both inside and outside of containers. Get the data. If a partner performs worse inside the container than directly on the page, ask why the container dilutes performance for that partner. Is there bias where there shouldn’t be? Hold the container provider accountable. If the container behaves the way it should in theory, a demand partner’s performance should not differ purely because it sits inside rather than outside a container.
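As a rough illustration of such a test, the sketch below compares one partner's results when integrated directly on the page with its results inside a container. The metric names, the `PartnerStats` structure, and all figures are hypothetical; a real comparison would use the publisher's own reporting data over matched traffic and time periods.

```python
from dataclasses import dataclass


@dataclass
class PartnerStats:
    """Aggregated results for one demand partner under one integration."""
    ad_requests: int   # auctions in which the partner was called
    bids: int          # auctions in which it returned a bid
    wins: int          # impressions it won
    revenue: float     # revenue earned, in the publisher's currency


def summarise(stats: PartnerStats) -> dict:
    """Normalise raw counts so that the two integrations can be compared."""
    return {
        "bid_rate": stats.bids / stats.ad_requests,
        "win_rate": stats.wins / stats.bids if stats.bids else 0.0,
        # Revenue per thousand ad requests
        "rpm": 1000 * stats.revenue / stats.ad_requests,
    }


def relative_change(direct: PartnerStats, in_container: PartnerStats) -> dict:
    """Relative change per metric when moving from on-page to in-container.

    Assumes every on-page metric is non-zero.
    """
    d, c = summarise(direct), summarise(in_container)
    return {metric: (c[metric] - d[metric]) / d[metric] for metric in d}


# Hypothetical figures for one partner over the same test period
direct = PartnerStats(ad_requests=100_000, bids=40_000, wins=8_000, revenue=24.0)
contained = PartnerStats(ad_requests=100_000, bids=30_000, wins=4_500, revenue=13.5)

for metric, change in relative_change(direct, contained).items():
    print(f"{metric}: {change:+.1%}")
```

A consistent drop across bid rate, win rate, and revenue for the same partner on comparable traffic is the kind of discrepancy worth raising with the container provider.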

Reduced transparency

Transparency is key, yet most client-side containers do not provide adequate transparency. Containers exist to help publishers manage partners, not mediate and apply decisioning logic – that is the job of the publisher’s ad server. And, with programmatic accounting for a majority of publisher revenue, the need for transparency has increased exponentially.

When considering container solutions, publishers should demand 100% transparency and use this checklist to keep their container honest:

Ensure the container facilitates maximum participation and fair competition. All bids should be sent to DFP, where they will have an equal shot at winning, unlike how some solutions conduct the container auction and send in the winning result to DFP.

Review the default settings and make a manual change if needed. All client-side containers come with default settings that favour the container’s own demand by calling it first. For example, the default Prebid setting is to call demand partners in alphabetical order, giving some companies a clear advantage.

Take control of optimisation and time-outs. Containers handle the vital setting of auction start times differently; and there is no clear industry standard. Setting the start times for a publisher gives the 'tech-as-a-service' provider a benefit over third parties, and they often end up optimising their own demand (either on purpose or otherwise).

Ask about additional fees to ensure economic transparency. Some exchanges, which may also operate containers, charge buyer fees on their own demand and don’t disclose this to publishers. Get the fee structure disclosed in writing.
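To see why call order and time-outs deserve attention, consider the simplified model below. It assumes partners are called one after another with a small gap between calls, and that a single deadline is measured from the start of the auction. The partner names, latencies, gap, and time-out are all hypothetical, and real containers may dispatch requests quite differently.

```python
def partners_in_time(call_order, latency_ms, timeout_ms, dispatch_gap_ms):
    """Return the partners whose bids arrive before the auction deadline.

    Assumed model: partners are called sequentially, `dispatch_gap_ms`
    apart, and the deadline is measured from the start of the auction.
    """
    in_time = []
    for position, partner in enumerate(call_order):
        arrival = position * dispatch_gap_ms + latency_ms[partner]
        if arrival <= timeout_ms:
            in_time.append(partner)
    return in_time


# Hypothetical partners with identical response latency
latency = {"alpha": 380, "bravo": 380, "zulu": 380}

alphabetical = sorted(latency)
print(partners_in_time(alphabetical, latency, timeout_ms=400, dispatch_gap_ms=15))

# Reversing the call order changes who misses the deadline
print(partners_in_time(alphabetical[::-1], latency, timeout_ms=400, dispatch_gap_ms=15))
```

Under these assumptions the three partners respond equally fast, yet whichever one is called last misses the deadline. That is why the order and timing settings are worth reviewing rather than leaving at their defaults.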

Limited quality, fraud, and counterfeit protections

Universal quality controls are not features included in containers, meaning that publishers must manage the quality settings of each exchange separately. It also means that publishers are receiving a radically different level of quality and brand protection. Not all exchanges have made the necessary investments to address the complex issues around traffic and ad quality.

Additionally, containers have enabled a new generation of fraud – domain spoofing – by facilitating massive impression redundancy and a huge number of new middlemen. DSPs often see the same impression from dozens of sources – many of them traffic sources without advanced traffic quality (TQ) technology – and this dynamic has given cover to bad actors who are counterfeiting tier-one publisher inventory. Their mislabelled inventory passes through the vulnerable header bidders masked as a premium domain, resulting in a massive dilution of inventory value.

Domain spoofing has escalated to a multimillion-dollar problem for some publishers. It is money that buyers think they are spending on premium placements, but in fact aren’t. Not only will this problem diminish confidence among buyers, but it will also harm the ability of publishers to secure the true value of their inventory.

The four auction problem

Programmatic marketplaces are designed as second-price auctions because this method is proven to be superior at incentivising buyers to bid 'true value' for an impression: the winner pays only the second-highest bidder’s bid price. This approach is modelled after paid search, which is the original online ad auction and the gold standard. But second-price auctions should not be combined with subsequent downstream auctions, and therein lies the problem.
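For readers less familiar with the mechanics, a second-price auction can be sketched in a few lines. This is a minimal illustration with hypothetical bidder names and bids; real exchanges typically add details such as a one-cent increment over the runner-up's bid, which are left out here.

```python
def second_price_auction(bids, floor=0.0):
    """Run a sealed-bid second-price auction.

    `bids` maps bidder name to bid (CPM). Returns (winner, clearing_price),
    or (None, None) when no bid meets the floor.
    """
    eligible = sorted(
        ((price, bidder) for bidder, price in bids.items() if price >= floor),
        reverse=True,
    )
    if not eligible:
        return None, None
    _, winner = eligible[0]
    # The winner pays the runner-up's bid, or the floor if there is no runner-up.
    clearing_price = eligible[1][0] if len(eligible) > 1 else floor
    return winner, clearing_price


# Hypothetical bids: the highest bidder wins but pays the second-highest bid
print(second_price_auction({"buyer_a": 25.00, "buyer_b": 5.00, "buyer_c": 3.50}))
```

Because the winner's payment does not depend on its own bid, bidding true value is the sensible strategy within a single auction. That property is what gets lost once the clearing price is passed on as a bid into another auction.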

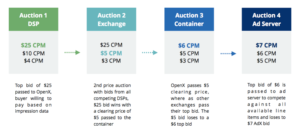

Here’s how the 'Four Auction Problem' plays out in practice:

Source: OpenX

The problem is that the buyer valued that impression (user) at USD$25 CPM and lost to a USD$6 CPM bid in the third auction, which ultimately lost to a USD$7 CPM bid in the fourth auction. The buyer lost this impression without any transparency into what happened, or why, meaning it is unable to adjust its strategy accordingly. This outcome is bad for publishers, who would prefer to get the USD$25 CPM, and equally bad for buyers, because these high-value impressions are vitally important to making their advertisers’ campaigns work.
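The sequence can be reproduced with a small simulation. Only the USD$25 valuation and the USD$6 and USD$7 bids come from the example above; the stage labels, the rival names, the other bid values, and the assumption that the first two stages reduce the winning bid to the second price before passing it on are all hypothetical.

```python
def run_stage(incoming, rivals, second_price):
    """Run one auction stage.

    `incoming` is the (name, bid) carried over from the previous stage and
    `rivals` maps rival names to bids. Returns the (name, bid) that is
    forwarded to the next stage.
    """
    bids = dict(rivals)
    bids[incoming[0]] = incoming[1]
    ranked = sorted(bids.items(), key=lambda item: item[1], reverse=True)
    winner, top_bid = ranked[0]
    if second_price and len(ranked) > 1:
        # The winner's bid is cut to the runner-up's bid before moving on.
        return winner, ranked[1][1]
    return winner, top_bid


# Stage labels, rival names and the $4 and $3 bids are hypothetical.
stages = [
    ("1: DSP", {"other_advertiser": 4.00}, True),
    ("2: exchange", {"rival_dsp": 3.00}, True),
    ("3: container", {"rival_exchange": 6.00}, False),
    ("4: ad server", {"other_demand": 7.00}, False),
]

carried = ("buyer", 25.00)  # the buyer's true value for the impression
for label, rivals, second_price in stages:
    carried = run_stage(carried, rivals, second_price)
    print(f"auction {label}: {carried[0]} goes forward at ${carried[1]:.2f}")
```

In this sketch the USD$25 bid has shrunk to USD$3 by the time it reaches the third auction, so it loses to the USD$6 bid, which in turn loses to USD$7. Each price reduction is reasonable within its own auction; stacking them is what hides the buyer's true value.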

Containers create an entirely new layer (or layers) between buyers and sellers that obscures what’s going on and makes it impossible for DSPs to adjust their bids to drive win rates up against the users they value the most.

The takeaway here is that with programmatic now driving a significant portion of revenue, publishers should demand hard data and transparency from all container providers. Demand specific SLAs for onboarding third-party demand sources. Execute a rigorous 'trust but verify' approach to each container relationship by evaluating the performance data and understanding the technical behaviour of each container, and know how those dynamics impact yield. Hold your container provider accountable.
