Cars in Japan Targeted to Drive Campaigns

Cars on the roads of Japan can potentially be used to drive advertising campaigns, enabling brands to push customised content to targeted audience groups.

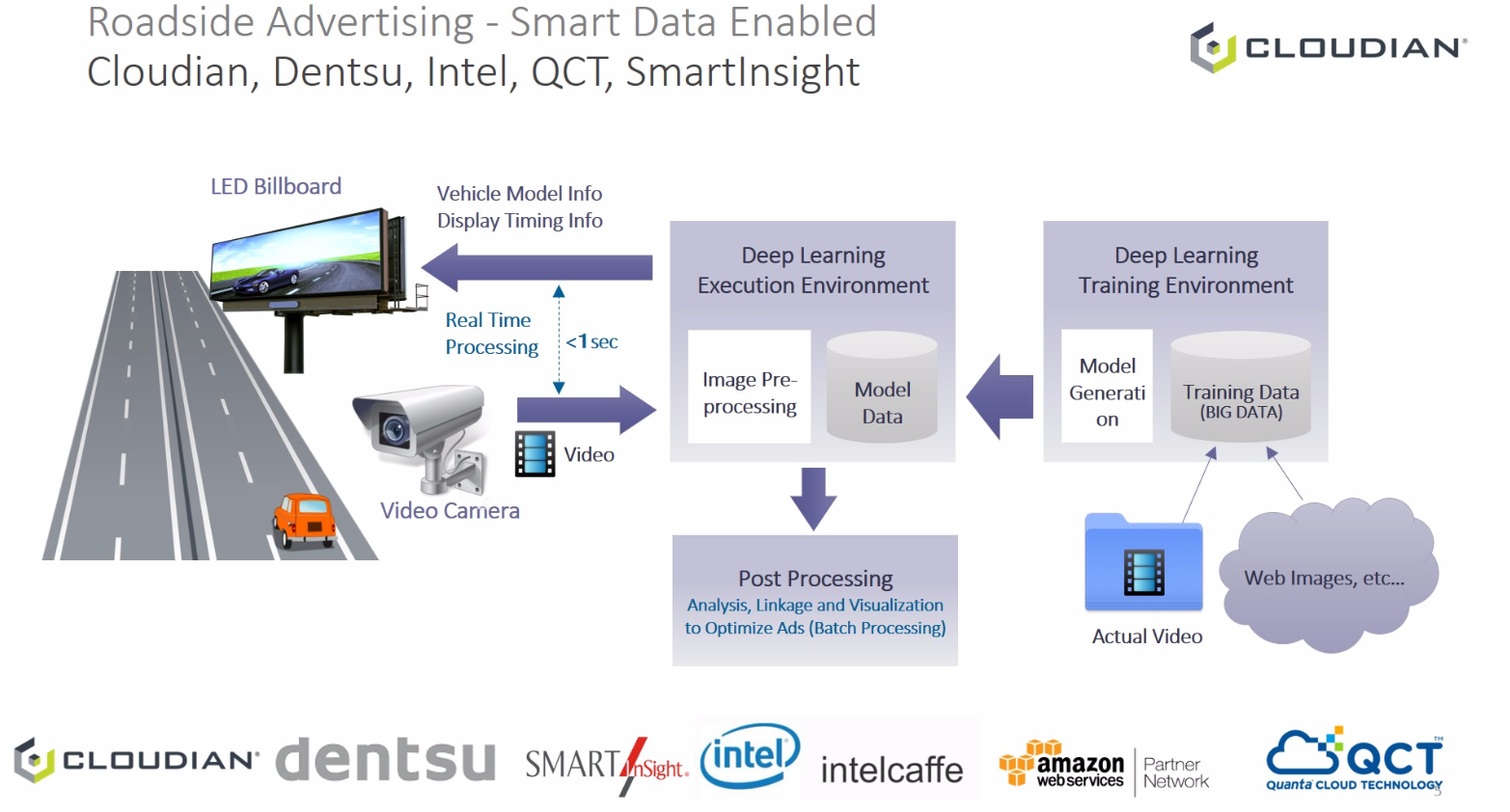

Cloudian and Dentsu have been working on a pilot that uses visual recognition to identify vehicles by their make and model. Using data analysis and artificial intelligence tools, the system would then be able to push custom ads, displayed on roadside billboards, to drivers of targeted vehicles.

The system's ability to recognise a vehicle's make from camera images was built up using Cloudian's HyperStore software, whose training data comprised a huge volume of vehicle data, images, and videos of car models, as well as vehicle attribute inputs. The application also was able to capture real-time data on traffic volumes at various times during the day. In future, this information could be made available to local municipalities and government agencies, such as the Ministry of Land, Infrastructure, Transport and Tourism, as well as to businesses for retail location planning.

Developed by cloud storage vendor Cloudian, which chose Dentsu as its creative partner in Japan, the system has been trained with up to 5,000 images per car model using data, such as that from used car sites, to ascertain information about the vehicle such as its model, brand name, and year of manufacture.

Based on its training thus far, the system would be able to identify "several hundreds" of different car models, said Ichiro Jinnai, Dentsu's director of out-of-home media service division. Upon identifying the vehicle model, custom ads could then be displayed on a nearby billboard based on the likely profile of the vehicle's driver, he said, in a phone interview with ExchangeWire.

A driver of a hybrid or electric vehicle model, for instance, could be served ads for environment-friendly products. The processing time needed to identify a car model after its image was captured via the camera was less than one second.

In the works for the past six months, the pilot also involved other technology partners including Intel Japan, Quanta Cloud Technology (QCT) Japan, and SmartInsight. The plan was to expand the trial to more areas within the Asian market, including highways, shopping malls, carparks, and places of interest.

Jinnai said the project was being tested at a location in the Roppongi district and would soon be deployed at another test site.

According to Cloudian president and co-founder Hiroshi Ohta, the system currently was clocking an average 99% accuracy rate. The ability to accurately recognise vehicles, though, was more challenging with certain types from car manufacturers that shared similar OEM models, Ohta explained.

Asked how the system would decide which ads to display when multiple cars were travelling on the same road at the same time, Jinnai said this would depend on the advertisers and on the audience they wanted to target, as associated with the type of cars driven. Advertisers also could decide to push ads based on the make or brand with the largest number of vehicles present at that location.
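As a rough illustration of the second option Jinnai described, the sketch below picks an ad for whichever vehicle category is most common among the cars currently detected. The category names, ad identifiers, and the `select_ad` function are hypothetical and are not taken from the pilot; the actual selection logic was not disclosed.

```python
from collections import Counter

# Hypothetical mapping from vehicle category to ad creative (not from the pilot).
AD_BY_CATEGORY = {
    "hybrid": "eco_products_ad",
    "luxury": "premium_watch_ad",
    "minivan": "family_resort_ad",
}
DEFAULT_AD = "generic_brand_ad"


def select_ad(detected_categories):
    """Return the ad for the most common vehicle category currently in view.

    Falls back to a default ad when nothing is detected or when the
    most common category has no ad mapped to it.
    """
    if not detected_categories:
        return DEFAULT_AD
    top_category, _count = Counter(detected_categories).most_common(1)[0]
    return AD_BY_CATEGORY.get(top_category, DEFAULT_AD)


# Example: two hybrids and one luxury car are in view of the camera.
print(select_ad(["hybrid", "luxury", "hybrid"]))
```

A production system would presumably also weigh advertiser priorities and campaign budgets, which this sketch leaves out.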

He explained that one of the primary objectives of the project was to bridge the targeting capabilities of programmatic ads to the physical world, especially as advertisers now recognised the impact of using dynamic digital ads.

For publishers, the system also could add value to billboards, offering targeted audience ads that were no longer static but native and dynamic, he said.

As the project was still in its pilot phase, various components, such as the duration of the ad delivery and audience profile associated with car types, were still being tested and tweaked to deliver the best results.

Cars could be identified from a distance of 600 to 700 metres, with a potential viewable video ad time of about five seconds for highway billboards, Jinnai said, while acknowledging that brand messages used here would have to be conveyed within the short time targeted drivers were at the location.

Potential for wider deployment

In this respect, the system could offer more potential benefits in other locations, such as the carparks of shopping malls, noted Ohta, where advertisers would have access to more relevant data with which to establish audience profiles, such as shopping patterns, as well as longer viewable ad time.

Retail ads, for instance, could be displayed at the entrance of the carpark or on digital signage within parking spaces. Again, in such deployment, custom ads could be displayed based on the type of cars identified in the carpark building, Ohta explained.

He added that, with the system's deep learning capabilities, more information could be used to build better customer profiles and drive marketing efforts. For instance, the system could recognise the area from which the vehicle originated based on its number plate, or measure the time the customer spent in the mall by the carpark entry and exit time.
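The time-in-mall measurement Ohta mentioned amounts to subtracting a vehicle's carpark entry time from its exit time. A minimal sketch follows, assuming both timestamps are already available for the same vehicle; the `dwell_minutes` function name and the sample times are illustrative only.

```python
from datetime import datetime


def dwell_minutes(entry_time, exit_time):
    """Return the minutes between carpark entry and exit for one vehicle."""
    if exit_time < entry_time:
        raise ValueError("exit_time must not be earlier than entry_time")
    return (exit_time - entry_time).total_seconds() / 60


# Example: a car enters at 10:00 and leaves at 11:30 on the same day.
entered = datetime(2017, 7, 1, 10, 0)
left = datetime(2017, 7, 1, 11, 30)
print(dwell_minutes(entered, left))
```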

Such ideas and their potential to support campaigns were currently being explored, he said.

Jinnai added that the project partners currently were exploring options with some shopping malls, but had yet to begin testing in such locations. This was targeted for the second phase of the pilot.

Ohta revealed one challenge they faced was the inability to collect data at night, when the vehicle's headlights made detection difficult. He said Cloudian had identified some potential ways to resolve this issue, but would first need to test them.

The project's focus, so far, had been on improving the system's detection capabilities, and integration work had begun to link the key components, including the billboard ads, cameras, and video analysis systems, he noted.

Testing on this integrated system would begin in August, with results from the test to be generated in September, he added. Should the test results prove positive, the project would be rolled out commercially.

Cloudian chief marketing officer Paul Turner said that with the focus, over the past six months, on improving the system's ad targeting capabilities, the analytics would enable the delivery of more viewable and targeted real-time ads.
